It does not refer to that humidity is not equal to 65! * The statement above refers to that what would branch of decision tree be for less than or equal to 65, and greater than 65. We would calculate the gain or gain ratio for every step. Now, we need to iterate on all humidity values and seperate dataset into two parts as instances less than or equal to current value, and instances greater than the current value. Firstly, we need to sort humidity values smallest to largest. Threshold should be a value which offers maximum gain for that attribute. C4.5 proposes to perform binary split based on a threshold value. GainRatio(Decision, Outlook) = Gain(Decision, Outlook)/SplitInfo(Decision, Outlook) = 0.246/1.577 = 0.155 Humidity AttributeĪs an exception, humidity is a continuous attribute. We need to convert continuous values to nominal ones. There are 5 instances for sunny, 4 instances for overcast and 5 instances for rain If you wonder the proof, please look at this post.Įntropy(Decision|Outlook=Rain) = – p(No). Notice that log 2(0) is actually equal to -∞ but assume that it is equal to 0. 3 of them are concluded as no, 2 of them are concluded as yes.Įntropy(Decision|Outlook=Sunny) = – p(No). Entropy(Decision|Outlook=Overcast) – p(Decision|Outlook=Rain). Entropy(Decision|Outlook=Sunny) – p(Decision|Outlook=Overcast). Gain(Decision, Outlook) = Entropy(Decision) – p(Decision|Outlook=Sunny). Gain(Decision, Outlook) = Entropy(Decision) – ∑ ( p(Decision|Outlook). Its possible values are sunny, overcast and rain. GainRatio(Decision, Wind) = Gain(Decision, Wind) / SplitInfo(Decision, Wind) = 0.049 / 0.985 = 0.049 Outlook Attribute There are 8 decisions for weak wind, and 6 decisions for strong wind. 2 of them are concluded as no, 6 of them are concluded as yes.Įntropy(Decision|Wind=Weak) = – p(No). Gain(Decision, Wind) = Entropy(Decision) – + Gain(Decision, Wind) = Entropy(Decision) – ∑ ( p(Decision|Wind).

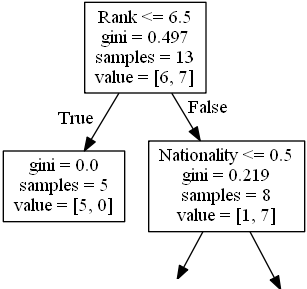

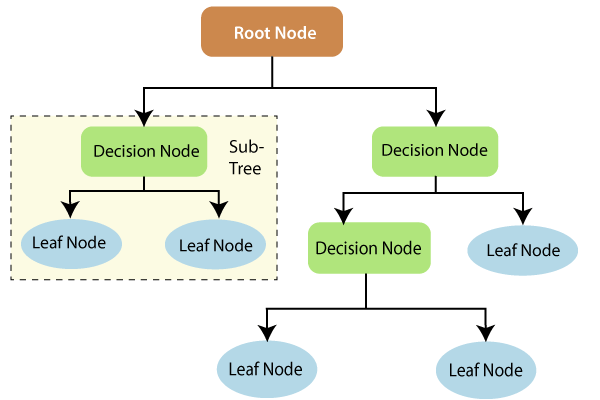

SplitInfo(A) = -∑ |Dj|/|D| x log 2|Dj|/|D| Wind Attribute Here, we need to calculate gain ratios instead of gains. In ID3 algorithm, we’ve calculated gains for each attribute. There are 14 examples 9 instances refer to yes decision, and 5 instances refer to no decision.Įntropy(Decision) = ∑ – p(I). Firstly, we need to calculate global entropy. We will do what we have done in ID3 example. The difference is that temperature and humidity columns have continuous values instead of nominal ones. The dataset might be familiar from the ID3 post. It informs about decision making factors to play tennis at outside for previous 14 days. We are going to create a decision table for the following dataset. □♂️ You may consider to enroll my top-rated machine learning course on Udemy Then, they add a decision rule for the found feature and build an another decision tree for the sub data set recursively until they reached a decision. They all look for the feature offering the highest information gain. No matter which decision tree algorithm you are running: ID3, C4.5, CART, CHAID or Regression Trees. Here, you should watch the following video to understand how decision tree algorithms work. Groot appears in Guardians of Galaxy and Avengers Infinity War Vlog Actually, it refers to re-implementation of C4.5 release 8. Additionally, some resources such as Weka named this algorithm as J48. Now, the algorithm can create a more generalized models including continuous data and could handle missing data. Here, Ross Quinlan, inventor of ID3, made some improvements for these bottlenecks and created a new algorithm named C4.5. Attributes must be nominal values, dataset must not include missing data, and finally the algorithm tend to fall into overfitting. Here, ID3 is the most common conventional decision tree algorithm but it has bottlenecks. Decision trees are still hot topics nowadays in data science world.
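The calculations walked through in this post can be sketched in a few lines of Python. This is only a minimal illustration, not a full C4.5 implementation. The function names (`entropy`, `gain_ratio`, `best_threshold`) are made up for this sketch, and class counts are written as (no, yes) pairs. The wind and outlook counts come from the post, where the strong wind and rain counts follow from the 9 yes / 5 no totals. The four-row humidity sample at the end is hypothetical toy data, used only to show the threshold search.

```python
from math import log2


def entropy(counts):
    """Entropy of a class distribution given as counts; 0 * log2(0) is treated as 0."""
    total = sum(counts)
    return -sum((c / total) * log2(c / total) for c in counts if c > 0)


def gain_ratio(parent_counts, branch_counts):
    """Return (gain, split_info, gain_ratio) for one candidate split."""
    total = sum(parent_counts)
    weights = [sum(branch) / total for branch in branch_counts]
    gain = entropy(parent_counts) - sum(
        w * entropy(branch) for w, branch in zip(weights, branch_counts)
    )
    # SplitInfo is the entropy of the branch sizes themselves.
    split_info = entropy([sum(branch) for branch in branch_counts])
    return gain, split_info, gain / split_info


def best_threshold(values, labels):
    """Try each distinct value t as a '<= t' / '> t' split and keep the highest gain."""
    classes = sorted(set(labels))
    parent = [labels.count(c) for c in classes]
    best = None
    # Skip the largest value: it would leave the '> t' branch empty.
    for t in sorted(set(values))[:-1]:
        left = [lab for v, lab in zip(values, labels) if v <= t]
        right = [lab for v, lab in zip(values, labels) if v > t]
        branches = [[part.count(c) for c in classes] for part in (left, right)]
        gain, _, _ = gain_ratio(parent, branches)
        if best is None or gain > best[1]:
            best = (t, gain)
    return best


# Counts are (no, yes). Decision: 5 no, 9 yes.
wind = gain_ratio((5, 9), [(2, 6), (3, 3)])              # weak, strong
outlook = gain_ratio((5, 9), [(3, 2), (0, 4), (2, 3)])   # sunny, overcast, rain
print("wind:    gain=%.3f split_info=%.3f ratio=%.3f" % wind)
print("outlook: gain=%.3f split_info=%.3f ratio=%.3f" % outlook)

# Hypothetical toy sample, NOT the humidity column of the dataset in this post.
toy_humidity = [65, 70, 80, 90]
toy_decision = ["yes", "yes", "no", "no"]
print("toy threshold:", best_threshold(toy_humidity, toy_decision))
```

The sketch selects the threshold by plain gain. Since the post says the gain or the gain ratio can be calculated at every step, swapping in the third value returned by `gain_ratio` would be the alternative.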
